Yael Argaman

Research Assistant at Zvi Meitar Institute of Emerging Technologies

Dov Greenbaum

Head of Zvi Meitar Institute of Emerging Technologies

As a society, we would expect to trust professional judges to balance all of the variables and externalities and provide a fair and just ruling. Yet recent data suggests that only around 40% of Americans believe that judges are trustworthy, a level similar to that of clergy and cops, but lower than that of doctors, pharmacists, grade school teachers, military officers and nurses.

Would we have more faith in our judicial systems if, instead of deciding cases based on their own knowledge and intuition, judges used complex software to decide and sentence? This software could be designed to profile character traits and analyze ostensibly objective details to provide a trustworthy analysis of the crime, possible guilt, mitigating factors and potential sentence.

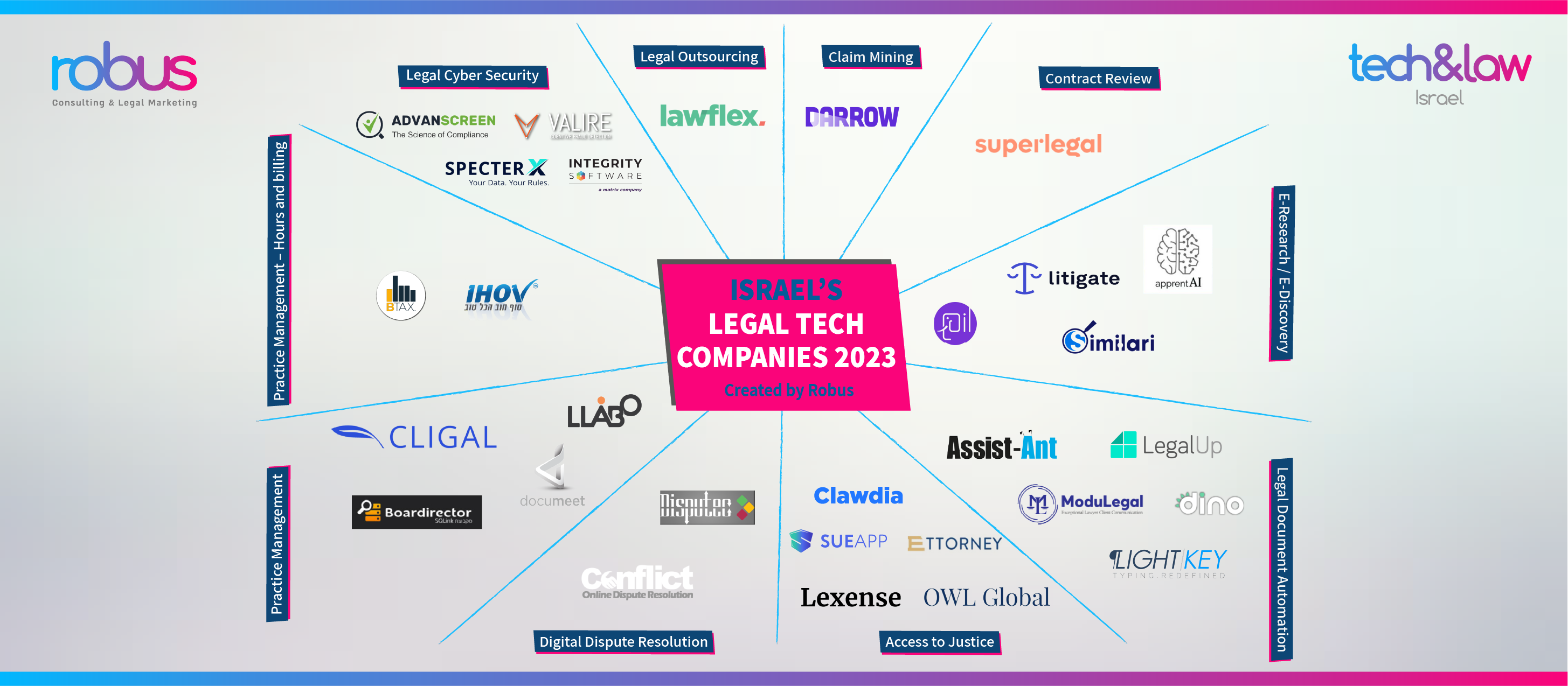

This technology exists. Predictive analytic judicial tools are designed to use artificial intelligence (AI), such as machine learning, to build multifaceted statistical prediction models that analyze all potentially relevant data and create a risk profile to inform the final judgment. Similar technologies are also employed in many other areas of life, including financial services, social networking, healthcare, marketing, and even the prediction of future crime.

Given the many apparent advantages of this technology, it makes sense that it’s already in use in some courts in the United States. Above all, its proponents hope that it will reduce ingrained and unconscious judicial bias: factors such as race, sex, and even the defendant’s overall physical appearance are known to influence the verdict, intentionally or not.

Once an AI verdict is produced, it can be used in at least two possible ways: either as the sole source of the final judgment, or simply as an anchor point, albeit an important one, that will inform the judge. In either case, it is hoped that the technology will provide fairer, more objective and more consistent results, and eventually even increase the credibility of the judicial system in the public eye.

However, the use of this technology raises various ethical and legal issues that ought to be resolved before that can happen. There are real concerns that the underlying data might actually preserve historical discrimination and existing bias; algorithms are often based on previously collected data that inherently includes biased information. The machine learning algorithms may seem to be taking into account myriad and even novel factors, but some academics have argued that these factors are simply proxies for our biases associated with race and socioeconomic status.

Compounding this concern is that much of the machine learning number crunching is unknowable. We might know how various factors are weighted by the machine, but it’s likely that we won’t understand why. Moreover, these software solutions are often provided to the courts by for-profit companies that justifiably seek to keep their intellectual property secret, effectively requiring society to trust a black box within its judicial system and hampering due process.

This lack of transparency regarding the specific criteria that AI analyzes in its determination of a verdict might result in even less confidence in the judicial system. But, on the flip side, providing access to the algorithms may lead to both the prosecution and defense attempting to game the system.

One potential solution would be for courts to run their algorithms on retrospective data and confirm that the results are not biased, or at least that they conform with expectations. Another would be to inform a small group of trusted individuals as to how the system works, so that they can verify to the public that the methods are not biased. Maybe we should ask the pharmacists or school teachers to help?
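For readers who want to see what a retrospective check might look like, here is a minimal sketch in Python. It is an illustration only, not the method used by any court or vendor: the record layout, the group labels "A" and "B", the function names and the data are all hypothetical, and comparing false positive rates is just one of several possible definitions of bias. It asks a single question: among people who did not go on to reoffend, how often did the tool flag members of each group as high risk?

```python
from collections import defaultdict


def false_positive_rates(records):
    """Per-group share of people who did NOT reoffend but were flagged high risk.

    Each record is a hypothetical (group, predicted_high_risk, reoffended) tuple.
    """
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:  # only people who did not reoffend count here
            total[group] += 1
            if predicted_high_risk:
                flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


def max_disparity(rates):
    """Gap between the highest and lowest per-group rate."""
    values = list(rates.values())
    return max(values) - min(values)


# Made-up historical records, for illustration only.
records = [
    ("A", True, False),
    ("A", False, False),
    ("A", False, False),
    ("A", True, True),
    ("B", True, False),
    ("B", True, False),
    ("B", False, False),
    ("B", False, True),
]

rates = false_positive_rates(records)
for group, rate in sorted(rates.items()):
    print(f"group {group}: false positive rate {rate:.2f}")
print(f"largest gap between groups: {max_disparity(rates):.2f}")
```

A large gap between groups would be a signal to investigate further, not proof of bias on its own. What counts as an acceptable gap, and which measure of fairness to use, are policy questions that the code cannot answer.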

This article was published as part of our collaboration with the Zvi Meitar Institute of Emerging Technologies.

The Zvi Meitar Institute is broadly interested in the ethical, economic, social and legal implications of new and emerging technologies, both in hi-tech and in biotech. Within this broad range of technologies, the institute will have a particular focus on disruptive technologies such as legal technology.